There’s a strange feeling that comes over you when you watch a YouTube clip like this one. Part of you laughs at how clumsy it all looks. Another part of you feels a small knot forming in your stomach, because you know this is only the early stage of something that will grow sharper and harder to spot. And perhaps the most unsettling part is realising how quickly people adapt to it, almost without noticing.

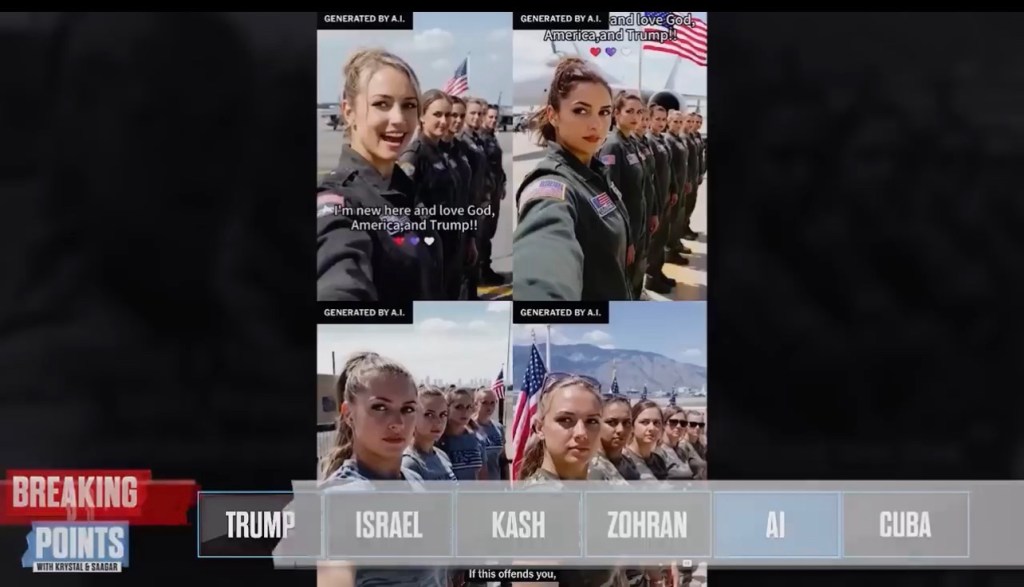

The video shows a row of AI‑generated women, all styled as if they’ve stepped out of a recruitment poster. They repeat the same line, with the same odd rhythm, as if someone pressed copy and paste on their personalities. It’s so stiff that you almost want to look away. Yet this is already being used to push political messages, and that alone should make us pause for a moment.

What stays with me is not the artificial faces. It’s the idea that someone, somewhere, believes this is a workable substitute for real public support. When you reach the point where you need to manufacture people to echo your views, you’re not building a movement. You’re filling a void.

And still, I think the bigger danger sits elsewhere. It’s in the way people now dismiss real events by calling them fake. The transcript mentions a journalist verifying a genuine incident, sharing it, and still being told it was AI. Even after official confirmation, some insisted it wasn’t real. That part hit me, because I’ve seen the same pattern in other contexts. Once someone decides that a fact is too uncomfortable to accept, AI becomes the perfect excuse to push it away.

This is where things get messy. If every uncomfortable truth can be waved off as synthetic, then the ground beneath public debate starts to crumble. You can’t have a serious conversation when half the room insists the table isn’t real. And you can’t measure public sentiment when the loudest voices might not even exist.

The presenters in the clip talk about how humans now behave like bots. They’re right. You see it every day. Short, clipped statements. Recycled lines. People posting things they barely seem to believe themselves. It’s as if the culture has absorbed the habits of automation. And once that happens, spotting the difference between a real person and a generated one becomes less about skill and more about instinct.

There’s also a quiet sadness in the way creative industries are being reshaped. Hollywood, programming, media: all feeling the pressure. Jobs thinning out. Skills being pushed aside. It’s not the first time technology has changed a sector, but the speed of this shift leaves little room for people to adjust. You can sense the anxiety in the transcript, and it’s not misplaced.

I keep coming back to one simple thought. If we lose the ability to agree on what is real, then everything else becomes noise. You can’t build policy on noise. You can’t build trust on noise. And you definitely can’t build a democracy on noise.

Perhaps the real challenge now is learning how to stay grounded when the information space feels like it’s drifting away from us. It’s not about rejecting technology. It’s about refusing to surrender our sense of reality to it. And that starts with something as basic as slowing down, checking sources, and remembering that not every image or clip deserves our immediate belief.

It’s not a perfect solution. But it’s a place to stand while the ground shifts.